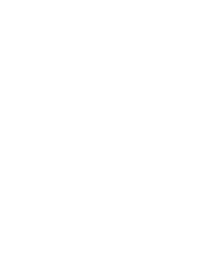

The whole world seems to be on the verge of a radical shift. Be it politics, economics, social systems, or technology, we are surrounded everywhere by clouds of uncertainty, promise, and renewal. Whether this shift is for better or worse remains to be seen, but the cry for change must not in itself be perceived as a sign of impending disaster. Data used to be an asset, but it turned into a liability as it became harder and harder to maintain data integrity and to ensure data availability while guarding privacy. In this context, the slogan “Make Data Great Again” means leveraging and managing data as an asset.

Data is a source of sustainable value for enterprises. Likewise, the people of a nation are its assets. “Make America Great Again” should then mean empowering the people of America.

Slogans are liked by many because they often capture popular sentiment. They also make the subsequent discourse interesting, to say the least, and a deeper dive can reveal insights that point to silver linings in the clouds of uncertainty.

Let us view the goal of ‘making data great again’ through a prism of slogans inspired by analogous populist slogans on the American political scene today, and come up with a few executive actions for the technology world.

BUILD THE WALL (or should it be a lake?)

I’m sure you are fed up with hearing “build the data lake” a million times, or perhaps pumped up if you happen to be in a certain camp. Interestingly, while “Build the Wall” is about securing the perimeter, building a data lake requires freeing data from isolationist data empires and data hoarders within an organization. The power of data is unleashed only by making it accessible to the masses. Once a data lake is built, it may also require a wall around it, but first things first.

We at DataFactZ are beginning to see a rise in the adoption of the data lake concept in enterprises. Thanks to distributed architectures like Hadoop running on commodity hardware, one doesn’t have to spend millions to consolidate and store data in a data lake. However, building a data lake requires a streamlined effort with a clear strategy and a roadmap. In practice, one can start with a small pond and evolve it into a lake.

Once you have a data lake built on a scalable, robust, and performant infrastructure, the door is open to building a variety of applications on top of it to unleash the power of data. Whether you are dealing with advanced analytics, mobile/web applications, content management systems, or real-time applications, to name a few, data starvation is no longer an issue.

Furthermore, governance structures such as master data management (MDM), as well as security and privacy walls, can be constructed around the data lake. As an example, one can use graph databases like Neo4j alongside the Hadoop ecosystem to link and correlate data from multiple enterprise sources and easily build an MDM layer. How cool is that?
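To make the idea of linking and correlating records concrete, here is a minimal sketch in plain Python. It is not Neo4j code and not a full MDM implementation: it simply treats records as nodes, connects any two records that share an email or phone value, and returns the connected groups as candidate "master" entities. The record fields, the source names, and the `link_records` helper are all hypothetical, chosen only for illustration.

```python
from collections import defaultdict


def link_records(records, match_keys):
    """Group records that share a value on any match key (union-find)."""
    parent = list(range(len(records)))

    def find(i):
        # Follow parent pointers to the group root, shortening the path as we go.
        while parent[i] != i:
            parent[i] = parent[parent[i]]
            i = parent[i]
        return i

    def union(a, b):
        ra, rb = find(a), find(b)
        if ra != rb:
            # Keep the smaller index as the root so grouping is deterministic.
            parent[max(ra, rb)] = min(ra, rb)

    seen = {}  # (key, normalized value) -> index of the first record with it
    for idx, rec in enumerate(records):
        for key in match_keys:
            value = rec.get(key)
            if not value:
                continue
            token = (key, str(value).strip().lower())
            if token in seen:
                union(idx, seen[token])
            else:
                seen[token] = idx

    groups = defaultdict(list)
    for idx, rec in enumerate(records):
        groups[find(idx)].append(rec)
    return list(groups.values())


# Hypothetical records from three enterprise systems.
records = [
    {"source": "crm", "name": "Ann Lee", "email": "ann@example.com"},
    {"source": "billing", "name": "A. Lee", "email": "ANN@example.com", "phone": "555-0100"},
    {"source": "support", "name": "Ann L.", "phone": "555-0100"},
    {"source": "crm", "name": "Bob Ray", "email": "bob@example.com"},
]
entities = link_records(records, match_keys=("email", "phone"))
```

Here the CRM and support records never share a field directly, yet they land in the same group because both match the billing record. That transitive linking is what a graph model gives you; a graph database would add persistence, querying, and scale on top of the same idea.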

Whatever you do, remember that Mexico is not going to pay you to build your data lake.

DEPORT THE UNDOCUMENTED (or should we introduce structure?)

Hidden data is like undocumented immigrants who are unable to fully participate in value creation. Most hidden data in organizations is unstructured. The lack of visibility, structure, and a consistent view of data is not only a missed opportunity for value capture but also, at times, outright risky and potentially detrimental to the well-being of the organization.

Before we can do anything with hidden data, the very first step is to expose it. This requires classifying, indexing, and introducing some structure so that it can be manipulated in an automated way. Not all hidden data is good or useful. We must have the means to distinguish good data from bad. Furthermore, we must have a strategy to modify or purge bad data without impacting good data.

Text analytics, natural language processing, full-text indexing, and semantic technologies have matured enough for organizations to take advantage of unstructured data.
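As a rough illustration of what "classifying, indexing, and introducing some structure" can look like, the sketch below tags free-text notes with a category and builds a tiny inverted index for full-text lookup. It is deliberately simplistic: the keyword rules, category names, and sample notes are hypothetical, and a production system would more likely rely on a search engine and trained NLP models than on hand-written keyword sets.

```python
import re
from collections import defaultdict

# Hypothetical keyword rules; a real system would likely use a trained classifier.
CATEGORY_KEYWORDS = {
    "billing": {"invoice", "payment", "refund"},
    "support": {"error", "crash", "outage"},
}


def tokenize(text):
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def classify(text):
    """Return the category with the most keyword hits, or 'unclassified'."""
    tokens = set(tokenize(text))
    scores = {cat: len(tokens & words) for cat, words in CATEGORY_KEYWORDS.items()}
    best = max(scores, key=scores.get)
    return best if scores[best] > 0 else "unclassified"


def build_index(documents):
    """Build an inverted index mapping each token to the ids of documents containing it."""
    index = defaultdict(set)
    for doc_id, text in documents.items():
        for token in tokenize(text):
            index[token].add(doc_id)
    return index


def search(index, query):
    """Return ids of documents that contain every term in the query."""
    terms = tokenize(query)
    if not terms:
        return set()
    return set.intersection(*(index.get(term, set()) for term in terms))


# Hypothetical "hidden" notes sitting in some shared folder.
documents = {
    "note-1": "Customer asked for a refund on invoice 1042.",
    "note-2": "App crash after login; error code 17.",
    "note-3": "Lunch menu for Friday.",
}
labels = {doc_id: classify(text) for doc_id, text in documents.items()}
index = build_index(documents)
```

With labels and an index in place, the notes can be filtered, searched, and reviewed automatically, and anything left "unclassified" becomes a candidate for closer inspection rather than blind purging.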

Completely purging or ignoring hidden data is a retrograde step and is more than likely to push the organization backwards.

REPEAL AFFORDABLE CARE ACT (or should we make it affordable?)

Even though the infrastructure for storing and processing data has become affordable, the overall cost of managing data is on the rise. As data proliferates and the cost of managing it increases, there is the potential to derive value from that data that grows proportionately faster than the cost. However, if this value is not realized for lack of policy, processes, or enforcement, the same data can become a huge liability.

Because data exists in silos, the majority of enterprise applications require extensive data movement from one system to another. Data gets duplicated to multiple locations and falls out of sync. Traditional data warehouses reflect data that is at least a day old (the D-1 version).

Leveraging modern data ingestion utilities built around data-parallel storage and computing clusters, such as Hadoop, Spark, and Cassandra, to name a few, can significantly reduce the overall cost of managing and processing data. The main advantages are twofold. First, data movement is reduced, as many applications can now process the data in place. Second, data can be kept more current, as it can be brought quickly into a data lake using parallel data ingestion tools such as Sqoop and Flume. Tools like Apache NiFi can be used to capture data in real time from a multiplicity of IoT devices and other sources of data origination.
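The parallel ingestion idea can be sketched in a few lines of Python. This is only an illustration of the pattern, not how Sqoop, Flume, or NiFi work internally: the two reader functions stand in for real source systems, the `lake` is just an in-memory dictionary, and every name here is hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor
from datetime import datetime, timezone


# Hypothetical readers standing in for real source systems.
def read_orders():
    return [{"order_id": 1, "amount": 40.0}, {"order_id": 2, "amount": 15.5}]


def read_sensor_events():
    return [{"device": "t-1", "temp_c": 21.4}]


SOURCES = {"orders": read_orders, "sensors": read_sensor_events}


def ingest(name, reader):
    """Pull rows from one source and stamp each with its origin and load time."""
    rows = reader()
    loaded_at = datetime.now(timezone.utc).isoformat()
    return name, [dict(row, _source=name, _loaded_at=loaded_at) for row in rows]


def ingest_all(sources, max_workers=4):
    """Ingest all sources concurrently into a single in-memory 'lake'."""
    lake = {}
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = [pool.submit(ingest, name, reader) for name, reader in sources.items()]
        for future in futures:
            name, rows = future.result()
            lake[name] = rows
    return lake


lake = ingest_all(SOURCES)
```

Because each source is pulled independently, a slow source does not hold up the others, and the `_loaded_at` stamp makes it easy to see how current each data set is compared with a D-1 warehouse load.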

And of course, this does not have to be a “Repeal and Replace” mandate.

SCRAP EXISTING PARTNERSHIPS (or should we evaluate and refine?)

We are clearly witnessing the arrival of new vendors in the industry and the emergence of new business models. Reliable and time-tested partnerships are under stress because of this trend. Many enterprises have been using traditional MPP-type appliances or equivalent architectures to store all kinds of data. The most interesting fact here is that many enterprises use these architectures for data dumps, staging, or data warehouses while paying millions of dollars for them.

Performance and scalability have become some of the biggest pain points for enterprises, and even after extensive performance tuning and optimization the gains are minimal to none in many cases. So what should the long-term strategy be for an enterprise that wants a scalable data architecture with state-of-the-art performance? The writing is on the wall. (No, we are not talking about that wall.)

And of course, “You did not inherit this mess.” These were the best available options at the time.

EXECUTIVE ACTIONS FOR ENTERPRISES

Here are a few executive actions that an organization can take to Make Data Great Again:

- Adopt a strategic view of data lake initiatives.

- Know your data as you would like to know your business.

- Leverage modern scalable and affordable data processing infrastructure.

- Reevaluate existing vendors and partnerships in the context of changing business conditions.